Yesterday I read Melissa Terras's "On Making, Use and Reuse in Digital Humanities":

That's right. The code we made is now in use by another institution, to do

their own transcription project. Hurrah!

It was always our aim in Transcribe Bentham to provide the code to others: it

was a key part of our project proposal. But you always have to wonder if that

is going to happen. It's the kind of thing that everyone writes in project

proposals. And whilst lots of people talk about making things in Digital

Humanities, and whether or not you have to make things to be a Digital

Humanist, we've shied away - as a community - from the spectre of reuse: who

takes our code and reappropriates it once we are done? How can we demonstrate

impact through the things we've built being utilised beyond just us and

- quite frankly - our mates?

So I'm happy as larry that the code we developed, and the system we have

built, is both useful to us, but is now useful to others. I'm not sure how

much I want to prod the sleeping monster that is general code reuse in

Digital Humanities... don't draw attention to our deficiencies!

But I would be delighted if anyone else could point me to examples where code

and systems in Digital Humanities were repurposed beyond their original

project, just as we would wish?

I was unable to persuade reCAPTCHA to let me leave a comment and

congratulations on Melissa's blog, so I am writing a brief post here. TL;DR:

Transcribe Bentham: congrats! My own horn: toot! toot!

To my knowledge, no one has set up another instance of Pleiades as a gazetteer.

But code written for Pleiades gets reused more and more widely the further down

in our stack you look. I designed it to be modular and reusable – a stack of

tools, not a single tool – so I'd be disappointed if that wasn't the case. I'll

explain how it works for us.

Pleiades uses the Plone (and Zope) web application framework. Our Plone

products and packages have taken on a life of their own as the collective.geo project.

IW:LEARN is a good example

of a collective.geo site. Contributions to collective.geo by almost 20 other

people have been making Pleiades better. And this is just the start of our

reuse story.

Pleiades and collective.geo Zope and Plone packages are based on Python GIS

packages spun off from Pleiades such as Shapely, Rtree, and Geojson. At PyCon

a couple of weeks ago, I ran into a lot of Shapely users. I saw it mentioned on

slides in talks and on posters in the poster session. Famous web and geography

hackers even write about using Shapely from time to time.

In terms of Shapely features, Pleiades now gets as much back from others as we give

out.

This is a fantastic position to be in.

Digging deeper in the stack, I've contributed (as "sgillies") to the development of GEOS as

part of my work on Pleiades, so Pleiades has thereby played a tiny role in

making thousands of open source GIS programs and web sites more spatially

capable. A Python protocol for sharing geospatial data that we invented for

Pleiades has been implemented rather widely in GIS software. Anywhere you see

__geo_interface__ and shape() or asShape(), or programmers sharing

data as GeoJSON-like Python mappings,

that's the impact of Pleiades. The GeoJSON format

itself has some of its roots in Pleiades.
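
For readers who haven't seen the protocol, here is a minimal sketch of the idea using only the standard library. The `Place` class, the helper names `as_mapping` and `to_geojson`, and the coordinates are hypothetical illustrations, not code or data from Pleiades: a provider exposes its geometry through a `__geo_interface__` attribute, and a consumer accepts either such an object or a plain GeoJSON-like mapping.

```python
import json


class Place:
    """Hypothetical gazetteer record; not Pleiades' actual data model."""

    def __init__(self, title, lon, lat):
        self.title = title
        self.lon = lon
        self.lat = lat

    @property
    def __geo_interface__(self):
        # Provider side: expose the geometry as a GeoJSON-like mapping.
        return {"type": "Point", "coordinates": (self.lon, self.lat)}


def as_mapping(obj):
    """Consumer side (hypothetical helper): accept an object that provides
    __geo_interface__, or fall back to treating obj as the mapping itself."""
    return getattr(obj, "__geo_interface__", obj)


def to_geojson(obj):
    """Serialize any provider or mapping to a GeoJSON geometry string."""
    return json.dumps(as_mapping(obj), sort_keys=True)


# Illustrative coordinates only.
example = Place("Example place", 23.72, 37.97)
print(to_geojson(example))
print(to_geojson({"type": "Point", "coordinates": (0.0, 0.0)}))
```

Shapely's `shapely.geometry.shape()` plays the consumer role in the same way: it accepts either a GeoJSON-like mapping or any object that provides `__geo_interface__`, which is what lets otherwise unrelated packages hand geometries to each other.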

Even if no one ever sets up another Pleiades site, we're having a significant

impact on GIS software and systems, even on big-time GIS software being used

for Spatial Humanities work. The keys to having a similar impact are, in my mind, 1)

modularization and generalization to increase the number of potential users and

contributors, and 2) a policy of open sourcing from day zero instead of open

sourcing after completion of the project – and after people have lost interest

and moved on to other software.

Comments

Re: Reintroducing a Python protocol for geospatial data

Author: `Stanley Fish <http://law.fiu.edu/faculty-2/stanley-fish/>`__

Dear Mr. Gillies,

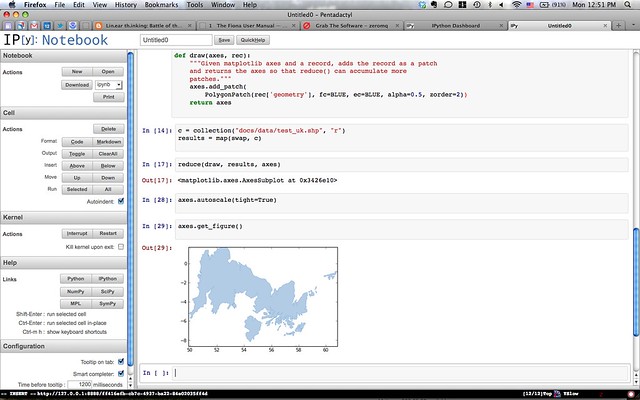

In truth it was your somewhat reductive blog post on Python's reduce() function on which I wished to comment, but I find myself thwarted by your practice of shutting down all reader commentary after a fortnight has passed -- something even I have not yet been accused of doing.

I thought you would like to know that I have made reference to your scintillating work on the Pleiades project in my fourth essay on the so-called "digital humanities" movement. These are to be found in my New York Times column (not to say, blog). I would be interested in your thoughts: http://bit.ly/H4Suf4

Sincerely,

Stanley Fish

Re: Reintroducing a Python protocol for geospatial data

Author: Sean

Ho, ho. For readers outside of academia: Stanley Fish is an English literature professor and opinion writer for the New York Times who has lately been winding up my digital humanities colleagues. Some background: http://www.bogost.com/blog/this_is_a_blog_post_about_the.shtml.